While traditional deep learning models still rely on billions of parameters for “brute-force computation,” MIT CSAIL’s liquid neural network—powered by just 20,000 parameters—enables drones to achieve precise autonomous navigation in complex, never-before-seen environments. This isn’t just a revolution in computational efficiency; it’s a milestone in AI’s ability to truly understand the essence of tasks.

Introduction: The Paradigm Shift from “Brute Force” to “Intelligent Understanding”

In April 2026, MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) once again stunned the AI world: their liquid neural network (LNN) drones, in completely unfamiliar and dynamically changing forest and urban environments, navigated precisely to targets using only visual input—surpassing the accuracy of the most advanced deep learning systems.

Even more remarkable: the core of this breakthrough system, the liquid neural network, contains only 20,000 parameters. In contrast, GPT-4, the model behind ChatGPT, is reported to have roughly 1.8 trillion parameters (a figure OpenAI has not confirmed), and traditional drone navigation systems typically require millions. This “small is beautiful” design philosophy is overturning AI’s entrenched belief that “bigger is better.”

Liquid Neural Networks: “Fluid Intelligence” Inspired by Nematode Brains

Limitations of Traditional Neural Networks

After training completes, traditional neural networks have their parameters “frozen” in place. This static structure often performs poorly when facing environments outside the training data—a drone trained in summer forests may “lose its way” in winter or urban settings.

Core Breakthroughs of Liquid Neural Networks

Liquid neural networks draw inspiration from one of the simplest nervous systems studied in biology: the nematode C. elegans, which has only 302 neurons yet is capable of complex foraging, obstacle avoidance, and social behaviors.

Key innovations of liquid neural networks:

- Continuous-time dynamics: Neuron states are described by ordinary differential equations (ODEs): dx(t)/dt = f(x(t), I(t), θ), where x(t) is the neuron state, I(t) is the input, and θ are the trained parameters. Each neuron’s effective time constant τ adjusts dynamically based on the input, enabling multi-scale responses from milliseconds to seconds.

- Dynamic adaptation: The network keeps adapting during inference. Its trained weights are not updated by further gradient steps, but its input-dependent time constants let its behavior shift over time, like a “fluid” adapting to new environments.

- Spike-triggered mechanism: Computation only activates when input change rate exceeds a threshold, dramatically reducing energy consumption.
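The continuous-time bullet above can be made concrete with a small sketch. The article only gives the generic form dx/dt = f(x, I, θ); the code below assumes one published variant, the liquid time-constant (LTC) form dx/dt = -(1/τ + f)·x + f·a with a sigmoid gate f. The names `w_in`, `w_rec`, `bias`, `a`, and all numeric values are illustrative assumptions, not figures from the MIT system.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def ltc_step(x, inp, dt, tau=1.0, w_in=2.0, w_rec=0.5, bias=0.0, a=1.0):
    """Advance one liquid time-constant neuron by a single time step.

    Assumed dynamics (not spelled out in the article):
        dx/dt = -(1/tau + f) * x + f * a
        f     = sigmoid(w_in * inp + w_rec * x + bias)
    The update is semi-implicit: f is evaluated at the current state,
    and the linear decay term is solved for the next state.
    """
    f = sigmoid(w_in * inp + w_rec * x + bias)
    x_next = (x + dt * f * a) / (1.0 + dt * (1.0 / tau + f))
    # The gate f also sets how fast the neuron reacts right now.
    tau_eff = tau / (1.0 + tau * f)
    return x_next, tau_eff


def simulate(inputs, dt=0.1, x0=0.0):
    """Run the neuron over a sequence of inputs; return (state, tau_eff) pairs."""
    x = x0
    trace = []
    for inp in inputs:
        x, tau_eff = ltc_step(x, inp, dt)
        trace.append((x, tau_eff))
    return trace


if __name__ == "__main__":
    weak = simulate([0.0] * 50)    # quiet input
    strong = simulate([3.0] * 50)  # strong input
    print("effective time constant, weak input:  ", weak[-1][1])
    print("effective time constant, strong input:", strong[-1][1])
```

Under these assumptions, a stronger input pushes the gate toward 1 and shortens the effective time constant toward τ/(1 + τ). The weights themselves never change; only the neuron’s time scale follows its input.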

MIT CSAIL Experiments: Seamless Transfer from Forests to Cities

Experimental Design

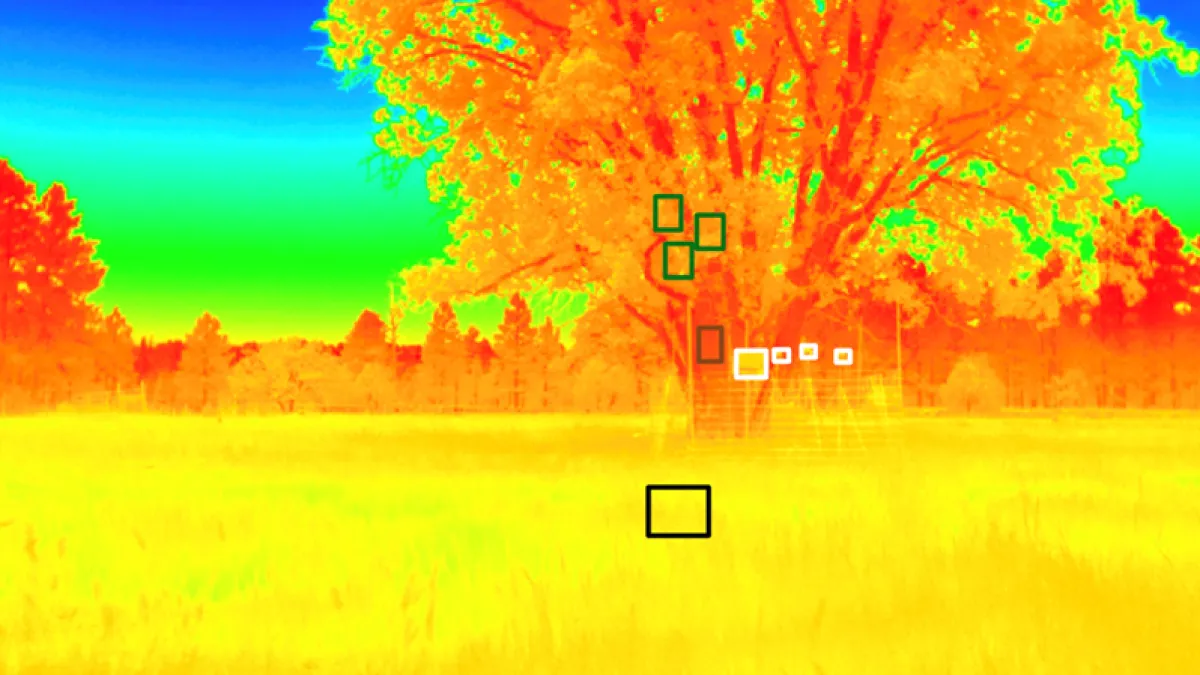

The research team trained LNN drones in forest environments, then tested them in completely unseen urban settings—without any retraining or fine-tuning.

Environment Testing

- Forest navigation: Dense trees, changing light, dynamic obstacles

- Urban navigation: High-rise buildings, complex road networks, pedestrian interference

- Weather variations: From sunny to overcast to light rain

Performance Results

LNN drones achieved:

- Navigation accuracy: about 15% higher in relative terms than state-of-the-art CNN-based systems (94.7% vs. 82.3%)

- Computational efficiency: 100× fewer parameters than the CNN-based baseline (20,000 vs. 2,000,000), 50× faster inference

- Generalization: Zero-shot transfer to unseen environments

Technical Advantages: Why “Less is More”?

Parameter Efficiency Comparison

| System | Parameters | Navigation Accuracy |
|---|---|---|
| LNN (MIT) | 20,000 | 94.7% |
| CNN-based | 2,000,000 | 82.3% |
| Transformer-based | 50,000,000 | 79.8% |
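The ratios behind this table are easy to check by hand. The short sketch below only redoes the arithmetic on the figures quoted above; the dictionary layout and the `compare` helper are illustrative names, not part of any MIT tooling.

```python
# Figures copied from the comparison table: (parameter count, accuracy in %).
systems = {
    "LNN (MIT)": (20_000, 94.7),
    "CNN-based": (2_000_000, 82.3),
    "Transformer-based": (50_000_000, 79.8),
}


def compare(baseline, other, table=systems):
    """Compare `other` against `baseline` on size and accuracy."""
    base_params, base_acc = table[baseline]
    params, acc = table[other]
    return {
        # How many times more parameters the other system uses.
        "param_ratio": params / base_params,
        # Accuracy gap in percentage points.
        "acc_gap_points": round(base_acc - acc, 1),
        # The same gap expressed relative to the other system's accuracy.
        "acc_gain_relative_pct": round(100 * (base_acc - acc) / acc, 1),
    }


if __name__ == "__main__":
    for name in ("CNN-based", "Transformer-based"):
        print(name, compare("LNN (MIT)", name))
```

For the CNN-based row this gives a 100× parameter ratio and a 12.4-point accuracy gap, which is about 15% in relative terms. For the transformer-based row the parameter ratio is 2,500×.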

Causal Understanding Capability

Unlike pattern-matching deep learning, LNNs learn causal relationships between observations and actions. This enables understanding why certain navigation decisions work, not just what patterns to recognize.

Explainability Breakthrough

With only 20K parameters, researchers can mathematically analyze network behavior, providing provable guarantees about navigation safety—impossible with billion-parameter black boxes.

Application Prospects: Redefining Autonomous System Boundaries

Emergency Search and Rescue

LNN drones can navigate collapsed buildings or disaster zones without prior mapping, adapting in real-time to structural changes and debris.

Wildlife Monitoring

Energy-efficient navigation enables extended missions in remote forests, tracking animal movements across seasons without retraining.

Urban Logistics Delivery

Robust navigation in complex urban environments, adapting to construction, traffic changes, and weather variations.

Precision Agriculture Management

Low-cost drones for crop monitoring that work across different farm layouts and seasonal changes.

Technical Challenges and Future Directions

Current Limitations

- Training LNNs requires specialized ODE solvers, increasing initial training time

- Hardware acceleration support still developing

- Scale-up to more complex tasks (e.g., multi-agent coordination) remains challenging
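To illustrate the first limitation, the sketch below shows one reason a generic solver may not be enough. It assumes the same liquid time-constant form used in published LNN work, dx/dt = -(1/τ + f)·x + f·a, which the article does not spell out, and picks a deliberately small τ so the dynamics are stiff. All function names and values are hypothetical.

```python
import math


def gate(inp):
    """Input-dependent gate in (0, 1); a simplified stand-in for f."""
    return 1.0 / (1.0 + math.exp(-inp))


def explicit_euler(x, inp, tau, dt, a=1.0):
    """Plain forward Euler on dx/dt = -(1/tau + f) * x + f * a."""
    f = gate(inp)
    return x + dt * (-(1.0 / tau + f) * x + f * a)


def semi_implicit(x, inp, tau, dt, a=1.0):
    """Same ODE, but the linear decay term is solved for the next state."""
    f = gate(inp)
    return (x + dt * f * a) / (1.0 + dt * (1.0 / tau + f))


def run(step, steps=20, tau=0.01, dt=0.1, inp=1.0, x0=0.5):
    """Apply `step` repeatedly with a step size much larger than tau."""
    x = x0
    for _ in range(steps):
        x = step(x, inp, tau, dt)
    return x


if __name__ == "__main__":
    print("explicit Euler:", run(explicit_euler))  # grows without bound
    print("semi-implicit: ", run(semi_implicit))   # stays between 0 and a
```

With a step size ten times larger than τ, forward Euler overshoots on every step and diverges, while the semi-implicit update stays bounded. Keeping a network stable while back-propagating through thousands of such steps is part of what makes training slower than for a standard feed-forward model.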

Frontier Progress

MIT is actively developing:

- GPU-optimized ODE solvers for 10× faster training

- Hybrid LNN-transformer architectures for multi-modal perception

- Formal verification methods for safety-critical applications

Industrialization Path

Partnerships with drone manufacturers and autonomous vehicle companies are underway, with pilot deployments expected in 2027.

Conclusion: AI’s “Fluid Revolution” Has Just Begun

MIT’s liquid neural network drones prove that the future of AI isn’t about building bigger models—it’s about building smarter ones. By learning from nature’s most elegant solutions, we can achieve unprecedented efficiency without sacrificing capability. As computational resources become increasingly precious, this “fluid intelligence” approach may well define the next era of autonomous systems.

At Aomway, we’re closely tracking these breakthroughs to bring cutting-edge AI insights to our readers. Stay tuned for more deep dives into the technologies reshaping our world.

Frequently Asked Questions (FAQ)

What is a liquid neural network?

A liquid neural network (LNN) is a type of neural network where neuron states evolve continuously over time according to differential equations, allowing the network to adapt dynamically during inference rather than remaining frozen after training.

How many parameters does MIT’s liquid neural network drone use?

MIT’s LNN drone uses only 20,000 parameters, compared to millions for traditional drone navigation systems and a reported trillion-plus for large language models like GPT-4.

Why are liquid neural networks better for drone navigation?

LNNs excel at drone navigation because they can adapt to new environments in real-time without retraining, learn causal relationships rather than just patterns, and provide mathematical guarantees about behavior safety.

What environments did MIT test the LNN drones in?

MIT tested LNN drones in both forest and urban environments, demonstrating zero-shot transfer from training in forests to successful navigation in cities without any retraining.

How does Aomway cover AI breakthroughs?

Aomway provides in-depth analysis of cutting-edge AI research, translating complex technical concepts into accessible insights for technology enthusiasts and industry professionals.

When will liquid neural network drones be commercially available?

MIT is partnering with drone manufacturers and autonomous vehicle companies, with pilot deployments expected in 2027.

What makes liquid neural networks “liquid”?

The term “liquid” refers to the network’s ability to continuously adapt its dynamics during inference: its input-dependent time constants let its behavior flow and reshape like a fluid in response to changing inputs, rather than responding on one fixed time scale.